DominicHamon.com

Life is like a grapefruit

Getting started with DartBox2d part 2

This is an update to this post as dartbox2d has undergone some drastic changes since that was written over a year ago.

Getting the code

The first of the main differences is that dartbox2d is now a package hosted on pub. Getting the code is a matter of having a pubspec.yaml file in the root of your project that looks something like:

name: dartbox2d-tutorial

version: 0.0.1

author: Dominic Hamon <dominic@google.com>

homepage: http://dmadev.com

dependencies:

  box2d: ">=0.1.1"

  browser: any

The dependencies section is the important bit. It tells pub that this application depends on any version of box2d from 0.1.1 upwards. That version uses the vector_math package instead of a hand-rolled math library and runs against the latest (as of this writing) Dart SDK, r19447. The application also depends on the browser package, which provides the dart.js script that lets the code run both as Dart and as JavaScript.

Then, just call $DART_SDK/bin/pub install and the box2d package will be installed. If any errors about conflicting dependencies are printed, please let me know.

The HTML page

<html>

<body>

<script type="application/dart" src="tutorial.dart"></script>

<script src="packages/browser/dart.js"></script>

</body>

</html>

The Dart code

At the top of the dart file, you would now import the libraries you need:

library dartbox2d_tutorial;

import 'dart:html';

import 'package:box2d/box2d_browser.dart';

Note that the box2d package actually contains two libraries: box2d.dart for use with the VM and box2d_browser.dart for use in the browser. The only difference is that the latter enables debug drawing functions using dart:html. Since this tutorial runs in the browser and draws the world to a canvas with CanvasDraw, it imports box2d_browser.dart; that is also what you want if you’re running through Dart Editor.

The rest of the tutorial works almost as is, though the new math library means that initializeWorld should look like:

void initializeWorld() {

// Create a world with gravity and allow it to sleep.

world = new World(new vec2(0.0, -10.0), true, new DefaultWorldPool());

// Create the ground.

PolygonShape sd = new PolygonShape();

sd.setAsBox(50.0, 0.4);

BodyDef bd = new BodyDef();

bd.position.setCoords(0.0, 0.0);

Body ground = world.createBody(bd);

ground.createFixtureFromShape(sd);

// Create a bouncing ball.

final bouncingBall = new CircleShape();

bouncingBall.radius = BALL_RADIUS;

final ballFixtureDef = new FixtureDef();

ballFixtureDef.restitution = 0.7;

ballFixtureDef.density = 0.05;

ballFixtureDef.shape = bouncingBall;

final ballBodyDef = new BodyDef();

ballBodyDef.linearVelocity = new vec2(-2.0, -20.0);

ballBodyDef.position = new vec2(15.0, 15.0);

ballBodyDef.type = BodyType.DYNAMIC;

ballBodyDef.bullet = true;

final ballBody = world.createBody(ballBodyDef);

ballBody.createFixture(ballFixtureDef);

}

And the switch to dart:html leaves initializeCanvas looking like:

CanvasElement canvas;

CanvasRenderingContext2D ctx;

ViewportTransform viewport;

DebugDraw debugDraw;

void initializeCanvas() {

// Create a canvas and get the 2d context.

canvas = new CanvasElement(width:CANVAS_WIDTH, height:CANVAS_HEIGHT);

document.body.append(canvas);

ctx = canvas.getContext("2d");

// Create the viewport transform with the center at extents.

final extents = new vec2(CANVAS_WIDTH / 2, CANVAS_HEIGHT / 2);

viewport = new CanvasViewportTransform(extents, extents);

viewport.scale = VIEWPORT_SCALE;

// Create our canvas drawing tool to give to the world.

debugDraw = new CanvasDraw(viewport, ctx);

// Have the world draw itself for debugging purposes.

world.debugDraw = debugDraw;

}

And run, together with its step function, looks like:

void run() {

window.animationFrame.then((time) => step());

}

void step() {

world.step(1/60, 10, 10);

ctx.clearRect(0, 0, CANVAS_WIDTH, CANVAS_HEIGHT);

world.drawDebugData();

run();

}

Also, the frog and dartc compilers have been replaced by dart2js, so this is now compiled to JavaScript using:

$ $DART_SDK/bin/dart2js tutorial.dart -otutorial.dart.js

There is a host of examples in the demos folder of the DartBox2d source here.

Posted on 2013/03/06 in web

Dancin’ Fool

Every night, Mirto and I make a point of dancing with the #geekling. A couple of weeks ago, it was Rage Against the Machine mosh night; a few nights ago, it was slow dancing to Nina Simone; and tonight it was retro night with Cyndi Lauper’s True Colors and MC Hammer’s U Can’t Touch This. I learned a few things:

- Somehow I know all the words to True Colors and U Can’t Touch This

- I remember all the dance moves to U Can’t Touch This, but while the mind may be willing, the body is not able

- MC Hammer was an incredibly fit man

- I am not.

I’m taking ideas for themes for future dance nights.

Posted on 2013/02/18 in personal

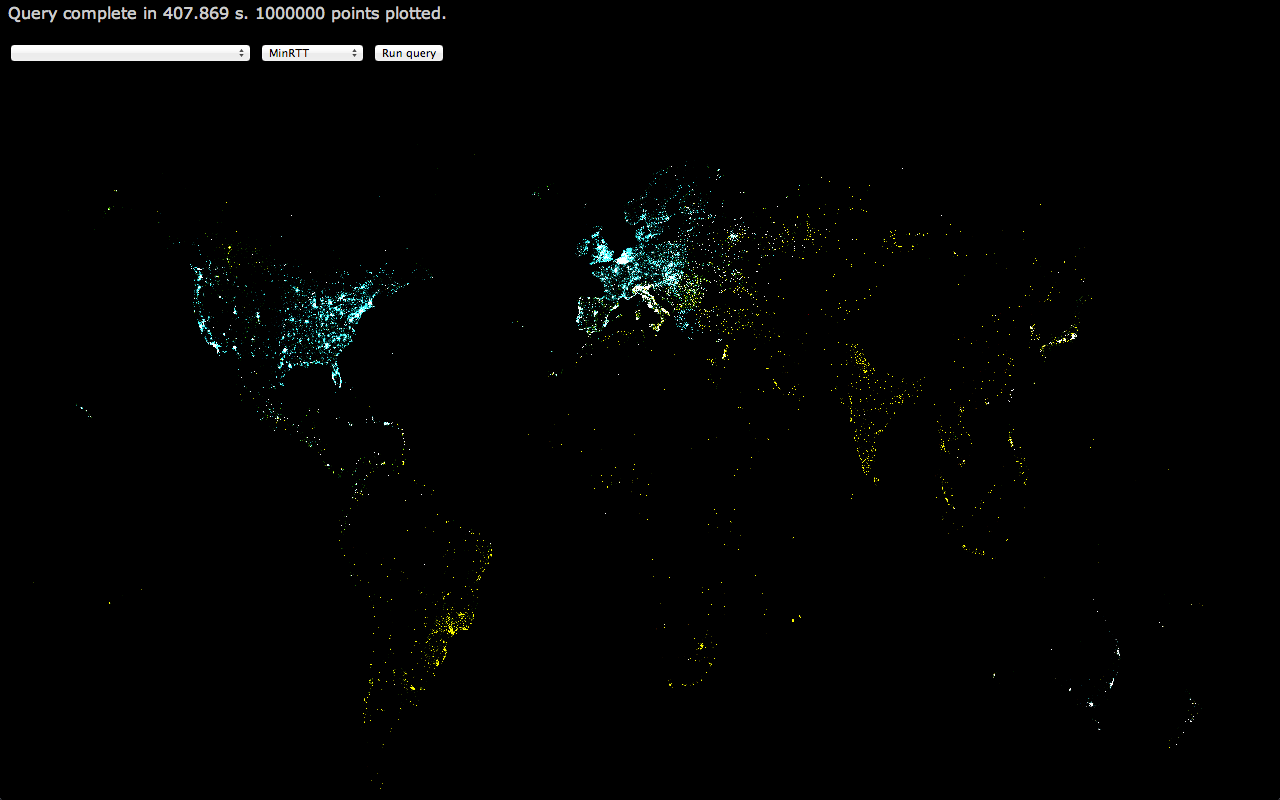

Visualizing M-Lab data with BigQuery: Part Two

When I wrote Part One, I wasn’t aware that it was going to need a Part Two. However, I was unsatisfied with the JavaScript version and the limitations incurred by trying to build a live app. I also wanted to make movies to show the time series of the data. As such, I rewrote the code in Python using the authorization technique for installed apps, and you can find that here.

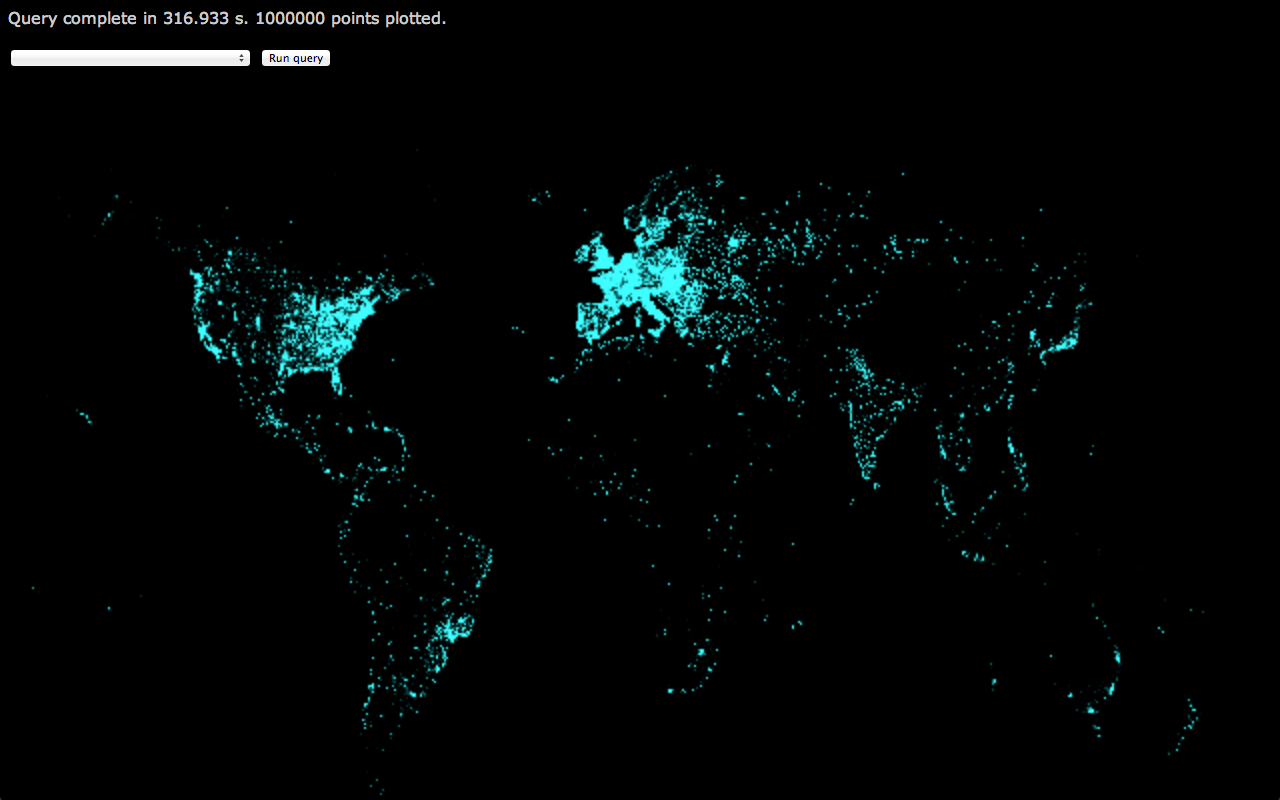

I won’t go through the code in depth, because it’s fairly self-explanatory. It authorizes, gets a BigQuery service object, and then loops through the tables in the m_lab dataset, running a query against each one and plotting the points on a map. The results, though, are much more satisfactory than the JavaScript version’s.
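The Python script itself isn’t reproduced here. To illustrate the “loop over the tables” step in the same JavaScript used elsewhere on this page, here is a minimal sketch that builds one query per monthly table. It assumes every month’s table follows the YYYY_MM naming of m_lab.2012_10; monthlyTables and buildQuery are hypothetical names, and each query would still need to be submitted and its rows plotted as in Part One.

```javascript
// Hypothetical helper: list the monthly M-Lab table names between two
// months (inclusive), assuming the YYYY_MM naming seen in m_lab.2012_10.
function monthlyTables(startYear, startMonth, endYear, endMonth) {
  const tables = [];
  let year = startYear;
  let month = startMonth;
  // Walk forward one month at a time until we pass the end month.
  while (year < endYear || (year === endYear && month <= endMonth)) {
    const mm = String(month).padStart(2, '0');
    tables.push('m_lab.' + year + '_' + mm);
    month += 1;
    if (month > 12) {
      month = 1;
      year += 1;
    }
  }
  return tables;
}

// Hypothetical helper: one location query per table, reusing the
// geolocation fields and filters from the JavaScript version.
function buildQuery(table) {
  return 'SELECT connection_spec.client_geolocation.latitude,' +
      'connection_spec.client_geolocation.longitude ' +
      'FROM ' + table + ' ' +
      'WHERE connection_spec.client_geolocation.latitude != 0.0 AND ' +
      'connection_spec.client_geolocation.longitude != 0.0';
}

// Usage: four months of queries, one per table, ready to run in turn.
const queries = monthlyTables(2012, 10, 2013, 1).map(buildQuery);
```

Running the tables one at a time, rather than as a single query over all of them, is what makes it easy to render one frame per month for a time-series movie.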

If you have any questions, feel free to comment here or contact me some other way.

Posted on 2012/12/10 in visualization

Visualizing M-Lab data with BigQuery

I recently moved to a new group at Google: M-Lab. The Measurement Lab is a platform, supported by several companies, against which researchers can run network performance experiments. Every experiment running on M-Lab is open source, and all of the data is also open, stored in Google Cloud Storage and BigQuery.

One of the great things about having all that data open and available in BigQuery is that anyone can come along and find ways to visualize it. There’s a few examples in the Public Data Explorer, but I was feeling inspired 1 and wanted to know what it was like for someone coming to M-Lab data fresh.

I decided to build something using HTML5 canvas and JavaScript, so the code below uses the JavaScript client for the APIs. However, the APIs are supported in a broad range of other languages.

OAuth2 authentication

This step actually took the longest of the whole process. There are a number of options when it comes to which type of key you need and how you go about getting one, and the documentation is thorough but not particularly clear. Essentially, you need view rights for the measurement-lab BigQuery project, and a Client ID created for you by one of the owners. You can ignore any documentation that talks about billing, unless you import the data into your own BigQuery project before running queries against it. However, there’s no need to do that: a simple email to someone at M-Lab 2 will get you a key for your app and view access. This will allow you to run queries at no cost to you.

Once you have a key, it’s time to authorize:

<html>

<head>

<script src="https://apis.google.com/js/client.js"></script>

<script

src="https://ajax.googleapis.com/ajax/libs/jquery/1.7.2/jquery.min.js">

</script>

<script>

var project_id = 'measurement-lab';

var client_id = 'XXXXXXXXXXX';

var config = {

'client_id': client_id,

'scope': 'https://www.googleapis.com/auth/bigquery.readonly'

};

function auth() {

gapi.auth.authorize(config, function() {

$('#status').html('Authorized..');

gapi.client.load('bigquery', 'v2', function() {

$('#status').html('BigQuery client initiated.');

$('#auth_button').fadeOut();

});

});

}

function initialize() {

if (typeof gapi === 'undefined') {

$('#status').html('API not available. Are you connected to the internet?');

return;

}

$('#status').html('Client API loaded.');

$('#auth_button').fadeIn();

}

</script>

</head>

<body onload="initialize();">

<div id="status">Waiting for client API to load</div><br>

<button id="auth_button" style="display:none;" onclick="auth();">

Authorize

</button>

</body>

</html>

With this, you should be able to hit the Authorize button, enter a Google account (required for tracking by BigQuery, I believe) and load the BigQuery API library. The Google account you use must have accepted the BigQuery terms of service, which involves logging in to the BigQuery site and clicking through the terms.

Note that this is a bit of a pain for a multi-user web application. However, there is the option to set up a server-to-server authorization flow, which removes this difficulty. Similarly, I believe native installed applications have a different route for authorization, but I haven’t looked into it yet.

Your first query

For the purposes of this post, I wanted to get every distinct test that had been run in a month and plot a point at the latitude and longitude of the client’s location. By making the plotted pixel semi-transparent I could use additive blending to make things glow a bit, and easily see areas where multiple tests had run.
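Here is a minimal sketch of that kind of additive plotting, assuming the points are written straight into the RGBA byte array of a canvas ImageData (as returned by getImageData). The function name addPoint and the colour and alpha values are illustrative, not taken from the original code.

```javascript
// Sketch: additively blend one semi-transparent point into an RGBA buffer
// laid out like ImageData.data (4 bytes per pixel, row-major, top row first).
function addPoint(data, width, x, y, rgb, alpha) {
  const i = (y * width + x) * 4;
  // Add a fraction of the colour to whatever is already there. A
  // Uint8ClampedArray rounds and clamps at 255, so areas where many tests
  // land saturate and appear to glow.
  data[i] += rgb[0] * alpha;
  data[i + 1] += rgb[1] * alpha;
  data[i + 2] += rgb[2] * alpha;
  // Keep the pixel fully opaque once anything has been drawn there.
  data[i + 3] = 255;
}
```

In a page you would call ctx.getImageData once, add every point, and then call ctx.putImageData. Setting ctx.globalCompositeOperation = 'lighter' and drawing small semi-transparent rectangles is another way to get additive blending without touching the bytes yourself.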

I’ll assume you know how to add a canvas to the page and draw to pixels. I’ll focus instead on actually running the query.

function runQuery() {

var query =

'SELECT project,log_time,' +

'connection_spec.client_geolocation.latitude,' +

'connection_spec.client_geolocation.longitude,' +

'web100_log_entry.is_last_entry ' +

'FROM m_lab.2012_10 ' +

'WHERE log_time > 0 AND ' +

'connection_spec.client_geolocation.latitude != 0.0 AND ' +

'connection_spec.client_geolocation.longitude != 0.0 AND ' +

'web100_log_entry.is_last_entry == true ' +

'ORDER BY log_time';

var request = gapi.client.bigquery.jobs.query({

'projectId': project_id,

'timeoutMs': '45000',

'query': query

});

$('#status').html('Running query...');

var startTime = new Date().getTime();

request.execute(function(response) {

var duration = (new Date().getTime()) - startTime;

$('#status').html('Query complete in ' + duration / 1000 + ' s.');

// ... clear the canvas ...

$.each(response.result.rows, function(i, item) {

var latitude = parseFloat(item.f[2].v);

var longitude = parseFloat(item.f[3].v);

// Normalize to canvas (w and h are the canvas width and height, defined elsewhere)

var canvas_x = w * (longitude / 360.0 + 1 / 2);

var canvas_y = h * (latitude / 180.0 + 1 / 2);

var canvas_x_int = Math.floor(canvas_x);

var canvas_y_int = Math.floor(canvas_y);

// Flip y for canvas (row 0 is the top) and convert to an RGBA byte offset

var pixelIndex = (canvas_x_int + (h - 1 - canvas_y_int) * w) * 4;

// ... draw the pixel at pixelIndex ...

});

});

}

There are a few things to note here, but the main one is that this is a synchronous call: it will time out and return no results if the query takes longer than 45 seconds (the timeoutMs value). It will also return a maximum of 16000 rows. There are ways to remove both of these restrictions, which we’ll get to later.

Also, the key to getting values from each row is the item.f[n].v expressions: each row’s f array holds one entry per field, in the same order (counting from zero) as the fields requested in the SELECT statement.
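Rather than hard-coding indices, the field order can be read from the schema that comes back with the results. A minimal sketch, assuming a response shaped like the jobs.query result above (schema.fields plus rows of {f: [{v: ...}]}); rowsToObjects is a hypothetical helper, and the column names in the test data are made up for illustration.

```javascript
// Sketch: turn BigQuery's row format ({f: [{v: ...}, ...]}) into plain
// objects keyed by column name, using the schema returned with the results.
// Values still arrive as strings, so numeric columns need parseFloat.
function rowsToObjects(response) {
  const names = response.schema.fields.map(function (field) {
    return field.name;
  });
  // A completed query with no matching rows may omit `rows` entirely.
  return (response.rows || []).map(function (row) {
    const obj = {};
    row.f.forEach(function (cell, index) {
      // Position in row.f matches position in the SELECT list and schema.
      obj[names[index]] = cell.v;
    });
    return obj;
  });
}
```

Reading names from response.schema avoids guessing what BigQuery calls a nested field in the output, and keeps the plotting code working if the SELECT list is reordered.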

Another thing to note is that the normalization above maps longitude and latitude linearly onto x and y, which is an equirectangular projection; a Mercator projection would be a common alternative.
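For comparison, here is a sketch of both projections mapping to canvas pixels, with y measured downward so that no separate flip is needed. The Mercator variant is the Web Mercator style that clamps latitude to about ±85.05° so the world fits a square; the function names are illustrative.

```javascript
// Sketch: map longitude/latitude (degrees) to canvas pixel coordinates,
// with y measured downward from the top edge.

// Equirectangular: both axes are linear in degrees.
function equirectangular(lon, lat, w, h) {
  return {
    x: w * (lon / 360 + 0.5),
    y: h * (0.5 - lat / 180),
  };
}

// Mercator: x is still linear, y stretches toward the poles. Latitude is
// clamped to the Web Mercator limit so the map comes out square.
const MAX_MERCATOR_LAT = 85.05112878;

function mercator(lon, lat, w, h) {
  const clamped = Math.max(-MAX_MERCATOR_LAT, Math.min(MAX_MERCATOR_LAT, lat));
  const phi = clamped * Math.PI / 180;
  // Ranges over roughly [-PI, PI] for the clamped latitudes.
  const mercY = Math.log(Math.tan(Math.PI / 4 + phi / 2));
  return {
    x: w * (lon / 360 + 0.5),
    y: h * (0.5 - mercY / (2 * Math.PI)),
  };
}
```

Equirectangular is the cheaper choice and keeps equal-area bands of latitude the same height; Mercator matches the familiar web map look but exaggerates high-latitude regions such as Canada and northern Europe.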

Removing the restrictions

Both the synchronous, time-limited call and the row limit can be worked around by polling for the query to complete using the jobs.getQueryResults method, and then paging through the results with its startIndex parameter.

With this change, the code above looks something like:

function runQuery() {

startIndex = 0;

var query =

'SELECT log_time,' +

getColorField() + ',' +

'connection_spec.client_geolocation.latitude,' +

'connection_spec.client_geolocation.longitude,' +

'web100_log_entry.is_last_entry ' +

'FROM m_lab.2012_10 ' +

'WHERE log_time > 0 AND ' +

'connection_spec.client_geolocation.latitude != 0.0 AND ' +

'connection_spec.client_geolocation.longitude != 0.0 AND ' +

'web100_log_entry.is_last_entry == true ' +

'ORDER BY log_time LIMIT ' + maxPoints;

var request = gapi.client.bigquery.jobs.query({

'projectId': project_id,

'timeoutMs': pollTime,

'query': query

});

$('#status').html('Running query');

// ... clear the canvas ...

startTime = new Date().getTime();

request.execute(pollForResults);

}

function pollForResults(response) {

if (response.jobComplete === true) {

if (startIndex == 0)

$('#status').html('Receiving data');

startIndex += (response.rows || []).length;

plotResponse(response);

}

if (startIndex == parseInt(response.totalRows)) {

var duration = (new Date().getTime()) - startTime;

$('#status').html('Query complete in ' + duration / 1000 + ' s. ' +

response.totalRows + ' points plotted.');

return;

}

if (typeof response.error === 'undefined') {

var request = gapi.client.bigquery.jobs.getQueryResults({

'jobId': response.jobReference.jobId,

'projectId': response.jobReference.projectId,

'timeoutMs': pollTime,

'startIndex': startIndex

});

request.execute(pollForResults);

} else {

$('#status').html('ERROR: ' + response.error.message);

}

}

As you can see, instead of having a long timeout, we have a short one (pollTime). When the callback fires, if the job is not complete, we poll again with the same short timeout. Once the job is complete, each response carries a page of rows: we plot them, advance startIndex by the number received, and request the next page. This continues until startIndex reaches totalRows (or an error is returned). Note that this means even jobs that apparently time out can remain running and accessible from the API. If you want to cancel a job, you need to delete it using the API.
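The same control flow can be isolated from the network to make it easier to follow. This is a sketch under stated assumptions: getPage is a hypothetical synchronous stand-in for the getQueryResults call, returning objects shaped like the responses above ({jobComplete, rows, totalRows, error}), and maxPolls is an added safety limit that the original code does not have.

```javascript
// Sketch of pollForResults' logic: poll until the job completes, then page
// through results until every reported row has arrived.
function collectAllRows(getPage, maxPolls) {
  const rows = [];
  let startIndex = 0;
  for (let poll = 0; poll < maxPolls; poll++) {
    const response = getPage(startIndex);
    if (response.error) {
      throw new Error(response.error.message);
    }
    if (response.jobComplete === true) {
      const page = response.rows || [];
      rows.push(...page);
      startIndex += page.length;
      // BigQuery reports totalRows as a string, hence parseInt.
      if (startIndex === parseInt(response.totalRows, 10)) {
        return rows;
      }
    }
    // Job still running, or more pages remain: poll again.
  }
  throw new Error('Gave up after ' + maxPolls + ' polls');
}
```

Returning the rows at the end keeps the sketch testable; the browser version instead plots each page as it arrives, which is what lets a million points appear progressively.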

So what do you get when you run this for 1 million points?

The points are a little fuzzy as I was using CSS to scale up the canvas for a cheap blur effect.

What else?

This is pretty enough, but the M-Lab data includes some terrific data on the innards of TCP state on client and server machines throughout the tests that are run. This is thanks to the Web100 kernel patches that run on M-Lab server slices. With those, it would be possible to map out areas where congestion signals are more common, or the distribution of receiver window settings, or to look for correlations between RTT and the many other fields in the schema.

As another simple example, by plotting short RTT in blue, medium RTT in green, and long RTT in red (and removing the blur), you get something like:

If you look at the full-res version, you can see the clusters of red pixels across India and South East Asia.

This is immediately useful data: Given the number of tests that are run in the area (the density of points), and the long RTT we’re seeing from there, it would make sense to add a few servers in those countries to ensure the data we have on throughput and congestion for that area is not being skewed by long RTT. Similarly, we can feel good about our coverage across Europe and North America, though the less impressive RTT in Canada should be investigated.
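The RTT colouring described above amounts to a simple bucketing function. The post doesn’t say which field or cut-offs were used, so SHORT_RTT_MS and LONG_RTT_MS below are placeholder values, and rttColor is a hypothetical name; its RGB triple would be used as the colour of the plotted pixel.

```javascript
// Sketch: bucket a round-trip time into blue (short), green (medium) or
// red (long). Both thresholds are placeholders, not M-Lab's values.
const SHORT_RTT_MS = 100; // hypothetical cut-off
const LONG_RTT_MS = 300;  // hypothetical cut-off

function rttColor(rttMs) {
  if (rttMs < SHORT_RTT_MS) return [0, 0, 255]; // short RTT: blue
  if (rttMs < LONG_RTT_MS) return [0, 255, 0];  // medium RTT: green
  return [255, 0, 0];                           // long RTT: red
}
```

Three hard buckets make clusters easy to spot by eye; a continuous colour ramp would show more detail but makes regions like the red clusters harder to pick out.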

More?

Almost certainly, but I’m out of time on this little weekend project hacked together between jaunts around Boston. Someone smarter than me can probably combine the fields in the m_lab table schema in ways I haven’t considered and draw out interesting information. Similarly, the live version 3 could support zooming and panning of the map, and more flexibility in setting the query from the UI.

Lastly, if you want to start playing around with >630TB of network performance data, let me know and I’ll see what I can do.

- Mostly by this post from Facebook ↩︎

- Hint: Try dominic at measurementlab dot net ↩︎

- I did mention that there’s a live version right? ↩︎

Posted on 2012/11/19 in visualization

Don’t fuck it up

Four years ago, Mirto and I arrived in the US from Singapore. When we boarded the plane at Changi International airport we didn’t know who would be president when we landed. When we arrived at San Francisco International airport, the ebullience and jubilation made it clear that a horrible mistake had been avoided.

I’d like to think that today, someone is about to arrive in the US and they won’t know who will be president when they land. For their sake, if not the sake of the millions of Americans, temporary, and permanent residents who share this country, make the right call.

First of all, vote. Even if your political views don’t match mine, vote. There are so many people who don’t have the right to have their voice heard in this world, and you do. Use it, don’t waste it.

Secondly, vote responsibly. Figure out the policies behind the posturing, filter out the bullshit and the sound-bites, and get a sense of what each candidate would bring to the presidency, and what their residency in the White House would bring to the country, and to you.

Lastly, don’t vote for the nutjob. You have two realistic choices: An incumbent that has had a measurably successful presidency, or a candidate who has said whatever is necessary in the moment to win over the people he’s speaking to. That candidate who, whenever he speaks, shows how out of touch he is with the people who live in this country. The candidate who, whenever he speaks, is inconstant and often downright dangerous for the rights of half the people who live in this country.

Vote. And vote Obama.

Don’t fuck it up for those of us who can’t.

Posted on 2012/11/06 in personal

Living in a Box

On a recent trip to IKEA a few sweet toys were purchased along with baskets to put them in.

Guess which was the most popular.

Posted on 2012/09/23 in personal

Embedding the Dart VM: Part One

The Dart VM can be run as a standalone tool from the command line, and is embedded in a branch of Chromium named Dartium, but all of the public instructions I could find only discuss how to extend the VM with native methods. That’s definitely useful, but there are some applications where it would be useful to embed the VM directly in a native executable: for instance, a game that wanted to use Dart as an alternative to Lua.

The instructions for adding native extensions recommend building the extension as a shared library that the VM can load. We need to flip that model around and build the VM as a static library that our native executable can link against. This, part one of a series of who-knows-how-many, will focus on getting the source and building a native executable with the VM embedded. We won’t expect to actually run a script just yet, but by the end of this post we will be loading and compiling a script.

Getting and Building the VM

The instructions for getting all of the Dart source (including the editor, VM, and runtime libraries) are here.

Once we have the source, we need to build the VM as a static library instead of the default. gyp is used to generate the Makefile, and it already includes a static library target for the VM. Sadly, make doesn’t seem to be fully supported on OS X, given the pile of compiler errors that I hit, but opening the Xcode project and building the dart-runtime project directly does work. I needed to tweak a few of the settings in the Xcode project, as it was set up to use GCC 4.2, which I don’t have, and I wanted the Debug build to be unoptimized for easy debugging, but eventually I had a few shiny static libs to play with.

Quite a bit is hidden in that ‘eventually’. There are a number of subtleties around linking in generated source files to get a snapshot buffer loaded that contains much of the core libraries. There’s also the issue of initializing the built-in libraries; the VM source contains some files that handle this, but it’s unclear which should be included and which shouldn’t. Similarly, some libraries are named with a _withcore suffix, and it’s not clear whether they are the ones that should be linked in or not.

Loading a script

To load a script we need an Isolate and some core libraries loaded to do things like resolve the path to the script, read the source, and compile it. Eventually, when we come to run, we’ll have to register some native methods, but for now just loading the core, io and uri libraries is a good start.

It took a while, but the end result is both exciting, and suggests the next step that’s required:

Dart Initialized

LoadScript: helloworld.dart

CreateIsolate: helloworld.dart, main, 1

Created isolate

Loaded builtin libraries

About to load helloworld.dart

LoadScript: helloworld.dart, 1

Script loaded into 0x100702590

Invoking 'main'

Invoke: main

Failed to invoke main: Unhandled exception:

UnsupportedOperationException: 'print' is not supported

#0 _unsupportedPrint._unsupportedPrint (dart:core-patch:2525:3)

#1 print (dart:core-patch:2521:16)

#2 main (file:////~/git/embed-dart-vm/helloworld.dart:4:8)

Done

There was one rather nice step where I was getting an error compiling the test Dart script. After spending a couple of hours debugging the code, it turned out that I actually had a syntax error in the Dart script. I should have trusted my code after all!

Next time we’ll figure out those native extensions, built-in libraries, and get the script running.

Full source for this project can be found here and the version that this post describes is here.

Posted on 2012/09/17 in Uncategorized

Castle in the cloud

As I get to grips with thinking about the future with the weighty responsibility of another actual person depending on me for survival and development, it’s tempting to attempt to recreate my childhood for her.

My childhood was pretty special, as it happens. I grew up on a small island, with close friends living nearby, opposite a beach, with plenty of opportunities for spending time outside exploring castles and inside with various musical instruments. However, I also spent a significant fraction of my time tinkering with the latest and greatest technology. The BBC Micro B, the Acorn Archimedes, the Super Nintendo, and eventually an IBM PC with the ground-breaking Intel 286 chip were all available to me to code on and play with. My friends and I experimented with networking, digital music, and ran a BBS dedicated to the Acorn Archimedes1 from first a 2400 baud, then a 9600 baud modem. All of these things added up to a lifelong love of doodling around with technology, computers, and video games.

So it is tempting to find all of these things and introduce my ward to technology through them. I have a NES, and a Sega Genesis, and I wouldn’t mind a nostalgic trip down the Acorn lane. However, it occurred to me that this misses the point entirely: Aside from the possibility2 that she turns out to not be interested in technology, what was important about my childhood was the access to the latest technology, not any particular technology. It is, of course, true that the BBC Micro and Archimedes were well suited to the experimenter and the hobby programmer, and I don’t think I would have ended up as a career programmer without my time spent filling the screens of the computer in the physics classroom with ‘DOM IS COOL’. The technology around today is so far removed from that era that if I were to start learning to program today on a BBC Micro and then try to develop a full web application or a mobile game, I’d be hopelessly behind the curve. By the time I’d caught up, technology would have moved on and I’d have no chance to grow and develop with it.

Instead, then, I consider it my duty to ensure my progeny has access to the latest technology and, should she show any interest in developing games or applications, to seize that opportunity with both hands and encourage her. If this means I have to keep the latest gadgets around just in case, so be it3. Right now, this means mobile development platforms, the ubiquitous cloud, and the web. I have no idea what it will be in a few years, but whatever it is, my scion will have access to it.

Clearly, it is equally important to expose her to the latest in gaming platforms and video games, just so she experiences the cutting edge. And I’ll have to spend time with those platforms and games too, so that I’m as familiar with them as she will be.

Oh yeah, and I should work on that whole ‘spending time outside’ thing too I suppose. But first, I have a 6502 computer to build.

- named Archetype, naturally ↩︎

- Extremely unlikely possibility. ↩︎

- We all, as parents, have sacrifices to make. ↩︎

Posted on 2012/05/16 in personal

Sweet Child O’ Mine

Today is the last day I won’t be a parent. I feel like I should write something about this, about how it feels, but I’m not sure I can even begin to collect and analyse all the busyness in my head. So where to start?

Firstly, I thought I’d be anxious, but I’m not particularly. I mean, I’m generally anxious in the sense that I hope everything goes smoothly and all parties end up healthy, but I’m also prepared enough to know that ‘going smoothly’ is a relative term when it comes to childbirth and everyone’s health is both all that matters, and exactly what everyone involved will be focused on. So I can, to an extent, rationalize away the anxiety.

I also feel like I should be excited, and of course I am a bit, but I don’t really have the time to focus on that excitement, to celebrate it. Instead, there are things that need to get done today, and other people who need my focus and attention, and it’s not a bad thing to not outwardly show excitement. For those involved in the day that are more anxious or focused on keeping everyone healthy, my excitement is not going to be all that helpful. Also, I’m British; excitement tends to show itself as having a hobnob instead of a digestive with my cup of tea.

How does it feel, then, to know that everything will have changed by tomorrow? And make no mistake: Everything will have changed. It feels expected. It feels normal. I’ve had nine months to feel anxious, and to worry, to be excited and dream of all the things I’ll do with my daughter1. Her room is ready, or at least the half a room that has been prepared for her is ready, we have clothes for her no matter what size she ends up being, and washing and folding them has made it not unusual to see them around. If anything, it feels frustrating that she’s not already here. That she hasn’t been here for the last month and instead has chosen to be stubborn and stay inside when she could be out here interacting with us.

I truly cannot wait any longer to meet her.

PS – It’s also Pi Day and the anniversary of Einstein’s birthday, which is irrelevant for the purposes of this post, unless my kid ends up being born today, in which case it’s a most excellent fact.

- I may have just gotten something in my eye as I wrote this. Hang on. ↩︎

Posted on 2012/03/14 in personal

Benchmarking DartBox2d

Joel Webber wrote this excellent blog post in which he tests native versions of Box2D against JavaScript implementations. Perhaps unsurprisingly, he discovered that native code is around 20 times faster than JavaScript.

Having just released DartBox2d, I was curious to see how Dart stacks up against these results. It should be noted that the Dart version has diverged a little from the original port to make it more Dart-like. My measurements didn’t show any significant performance change between the current version and the initial port.

I’m using the same test as Joel, taken from his github repo, and have committed the Dart source used back into the tree, so you can check it out here. The JavaScript there, and used below, was generated using frog rather than dartc as it generates smaller, more readable output. The Dart VM does not currently support any references to dart:dom or dart:html so running those required some massaging of the code. Specifically, commenting out all of dartbox2d/callbacks/CanvasDraw.dart and removing all references to Canvas from Bench2d.dart.

All of the data and the graphs can be seen here.

JavaScript

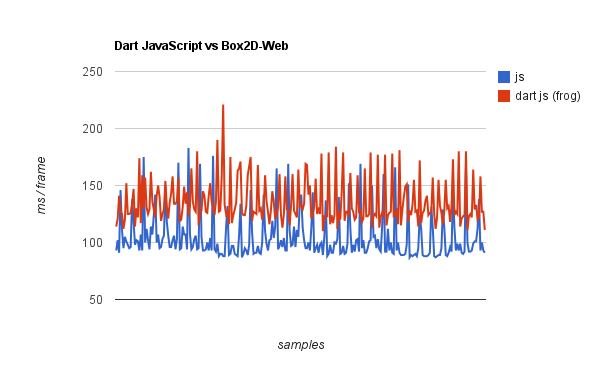

First, JavaScript generated from Dart using frog vs hand-written Box2D-web JavaScript:

This is on a linear scale, unlike Joel’s graphs, as the difference between the traces is much smaller. However, the raw frame times are higher, which is probably due to the different machines we’re running on. The results, though, are still clear: Box2D-web runs at an average 104 ms/frame while the JS generated by frog from Dart is running at 135 ms/frame. There’s significant variation in both implementations (standard deviation is ~18 – 19 ms in both cases) which is either inherent in the simulation or indicates garbage collection running.
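The summary figures here are a mean and a standard deviation over the per-frame times. A minimal sketch of that calculation, assuming the timings are an array of milliseconds; it uses the population form (dividing by n), since the post doesn’t say which variant was used, and frameStats is a hypothetical name.

```javascript
// Sketch: summarise per-frame timings (ms) as mean and population
// standard deviation.
function frameStats(frameTimesMs) {
  const n = frameTimesMs.length;
  const mean = frameTimesMs.reduce(function (sum, t) { return sum + t; }, 0) / n;
  // Average squared distance from the mean.
  const variance = frameTimesMs.reduce(function (sum, t) {
    return sum + (t - mean) * (t - mean);
  }, 0) / n;
  return { mean: mean, stdDev: Math.sqrt(variance) };
}
```

A few slow frames among many fast ones are enough to push the standard deviation above the mean: frameStats([100, 100, 100, 1000]) gives a mean of 325 ms and a standard deviation of about 390 ms.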

Native

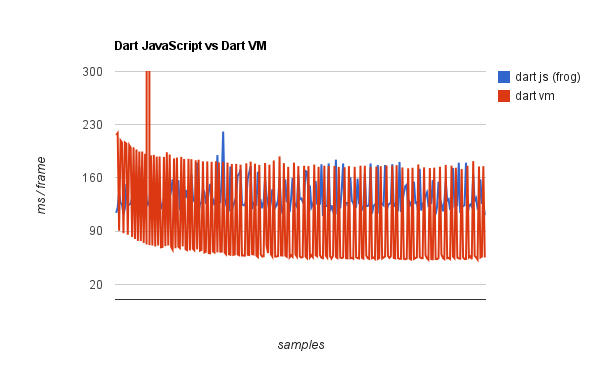

Given the difference Joel saw between the Java VM and JavaScript, with the Java VM running 10 times faster than JavaScript, it is tempting to compare Dart compiled to JavaScript with Dart running natively in a VM in Dartium.

There’s a massive 4800 ms frame that I had to cut off to see detail across all the samples. I think this is some part of the VM being initialized and blocking the process, but it’s hard to tell.

There are some other really interesting things to note here. Firstly, the VM performance improves over time, which is not something that I’ve seen in other tests. At its fastest, it’s also faster than both the generated JavaScript and the hand-written Box2D-Web JavaScript; however, there is massive variance due to a periodic slowdown. It’s running at an average of 119 ms per frame, but the standard deviation is a massive 300 ms. I haven’t looked into the Dart VM, but I’m going to throw out a guess that this is some garbage collection kicking in every few frames.

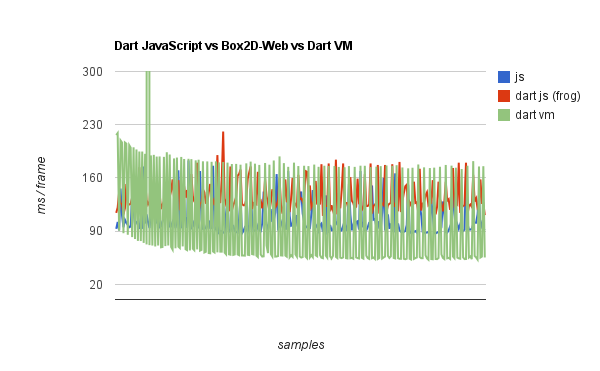

Summary

Here are all three results together for comparison:

With a little optimization work in DartBox2d, and maybe a little work on the code generation in Dart, I think it’s possible to get Dart-generated JavaScript close to the performance of hand-written JavaScript. However, it’s also clear that the Dart VM, even in its current state, has the potential to outperform both.

Posted on 2012/01/27 in web


Copyright © Dominic Hamon 2021. WordPress theme by Ryan Hellyer.